Purposeful AI for shared prosperity

Today’s realities of Artificial Intelligence highlight the need to rethink value creation, leadership competences and governance systems. CEC has therefore launched a new work programme on AI with a webinar on “Designing Artificial Intelligence for People, Planet and Prosperity”. The session, with AI expert Nicolas Blanc, offered rich insights into the role of managers, social dialogue and regulation in making AI fit for purpose and trustworthy.

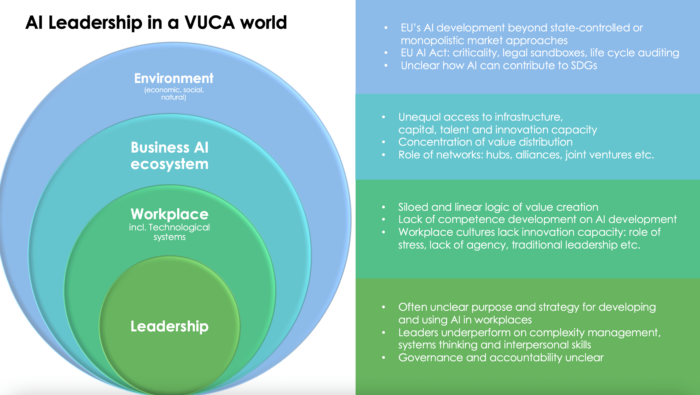

The contemporary Artificial Intelligence landscape is marked by competing visions of its role in the economy. Beyond state-controlled or monopolistic trends, the EU has chosen to develop a values-based approach for its AI agenda. Given the dominance of actors from China and the USA, the EU has every interest in defining its own competitive advantage, grounded in the needs of its consumers, citizens, workers and businesses. According to the JRC, the EU's strengths lie in AI services and robotics, as well as in AI R&D.

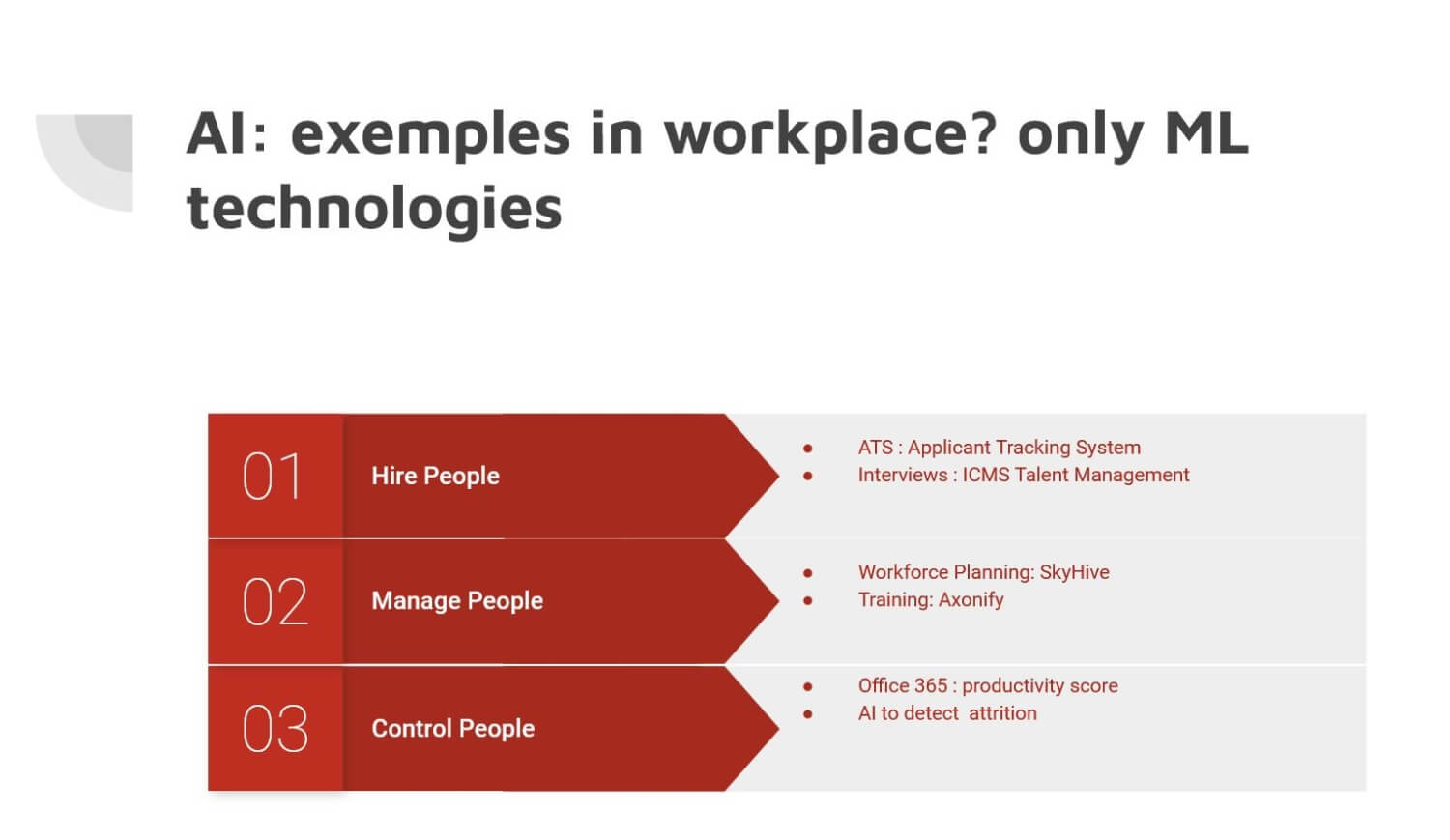

The workplace is the source of AI value creation

The insights from the webinar on 30 January 2023 made clear that the world of work and leadership has been the overlooked dimension of making AI a success. The workplace is the source of human innovation and purposeful business development. It sits at the crossroads of business ecosystems, innovation infrastructure and regulatory landscapes. Professional management and sustainable leadership are key to making AI a success in workplaces.

Making workplaces sources of innovation

Overall, rather than replacing human labour, AI can make work either more exploitative or more enriching. To ensure that the right framework for innovation, shared value distribution and long-term success is created, leaders need to steer through the complex realities of AI development. Many workplaces today are characterised by a siloed and linear logic of value creation, a lack of human (leadership) competences, a lack of innovation culture, high levels of stress and a lack of human agency.

Rethinking AI governance

When it comes to regulation, Nicolas Blanc highlighted that most measures in place remain voluntary soft law. The upcoming AI Act may introduce a top-down logic that limits high-risk applications (with self-assessment) and integrates a “time-to-market” approach with regulatory sandboxes. In his opinion, the EU needs to complement this with a bottom-up approach through more social dialogue and multi-stakeholder involvement in value creation processes. Human workers and managers need to become driving forces. He presented the “Value Radar” tool for monitoring the shared value distribution of AI, one of the outcomes of the “SECOIA DEAL” EU project, which aimed to re-establish trust in AI through dialogue.

A sustainable purpose for AI

Lastly, it is worth reflecting, as leaders in public and private sector organisations, on the purpose of developing AI. Rather than following a path that leads into competition with machines, it is up to humans to design AI systems for human purposes: for the economy, society and nature. With the climate emergency already posing great threats to supply chains and human lives, AI technologies must be developed responsibly. Deep learning technologies in particular are energy-intensive and generate high levels of emissions.

For instance, GPT-3, OpenAI’s sophisticated natural language model, used about 1.3 GWh of electricity and produced 552 metric tons of carbon dioxide emissions. This is roughly equivalent to driving a car 1.3 million miles – in one hour. When asked, ChatGPT was not able to explain its own carbon footprint, referring to it as being “too complex”: a poor result if AI is to become a solution to the even more complex climate emergency. Looking at the social impact, the TIME story about 50 000 Kenyan workers categorising cases of violence every day for less than $2 an hour for OpenAI may also not be exemplary for a sustainable business model.
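The car comparison can be sanity-checked with a short calculation. The sketch below takes the 552 metric tons from the text and assumes a typical passenger car emits roughly 400 grams of CO2 per mile; that emission factor and the function name `car_miles_equivalent` are illustrative assumptions, not figures from the webinar.

```python
# Back-of-the-envelope check of the "car miles" comparison.
# Assumption (not from the webinar): a typical passenger car
# emits about 400 g of CO2 per mile driven.
GRAMS_PER_TONNE = 1_000_000


def car_miles_equivalent(tonnes_co2: float, grams_co2_per_mile: float = 400.0) -> float:
    """Return the miles a car would need to drive to emit the given CO2."""
    return tonnes_co2 * GRAMS_PER_TONNE / grams_co2_per_mile


miles = car_miles_equivalent(552)  # 552 t CO2, as quoted in the text
print(f"{miles:,.0f} miles")  # prints "1,380,000 miles"
```

Under this assumption the result lands close to the 1.3 million miles quoted above; a different emission factor would shift the figure proportionally.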

More information

PowerPoint presentation of webinar